AI in Multispectral Imaging for Pest Detection

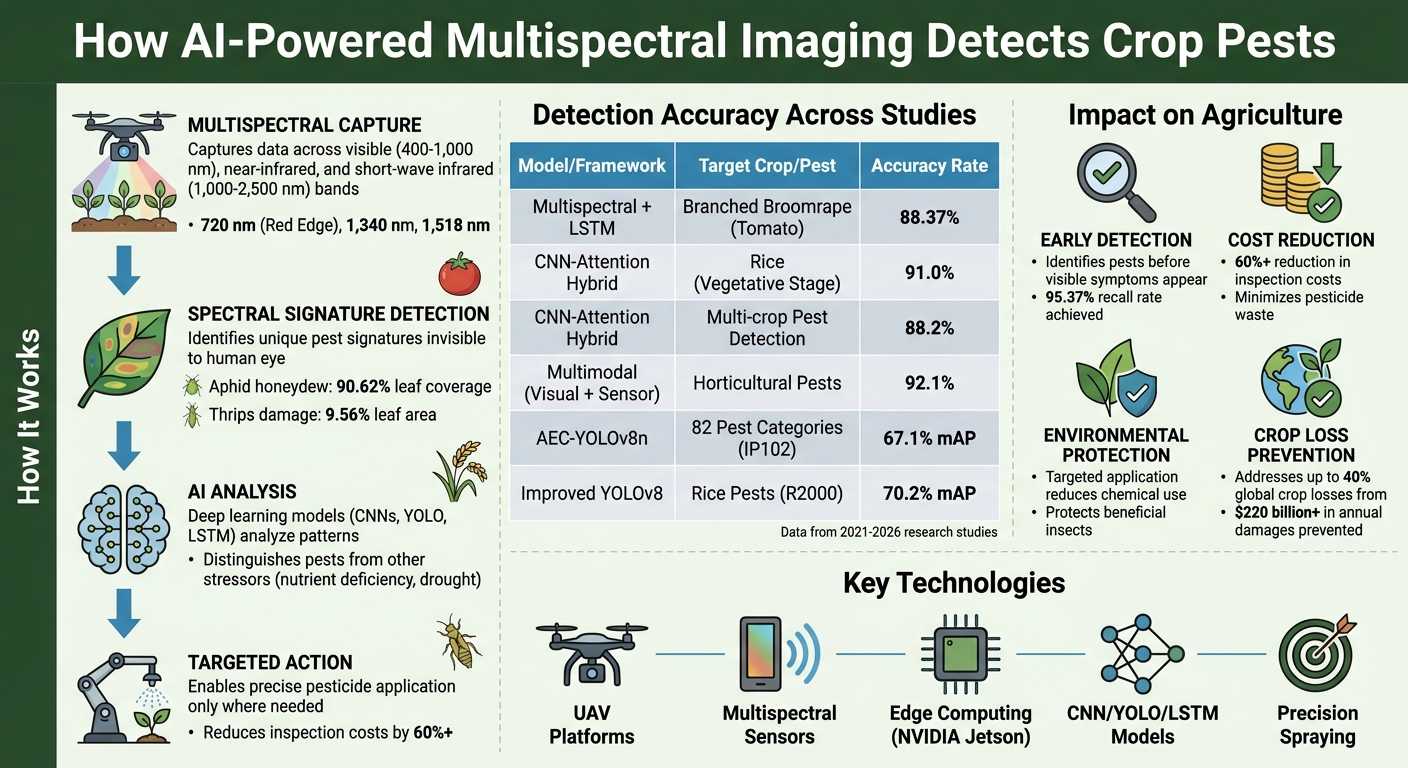

Multispectral imaging combined with AI is transforming agriculture by identifying pests and crop stress before visible symptoms appear. Here's how it works:

- Multispectral Imaging: Captures data across visible and non-visible wavelengths (e.g., near-infrared, short-wave infrared) to detect plant stress and pest activity.

- AI Analysis: Deep learning models analyze spectral signatures to distinguish pests from other stressors, achieving accuracy rates of 88%-91%.

Key Benefits:

- Early pest detection reduces crop losses.

- Drone-based systems, such as the DJI Agras T50 Sprayer Drone, cut inspection costs by over 60%.

- Targeted pesticide application minimizes waste and environmental impact.

Real-world applications include:

- Detecting branched broomrape in tomatoes with 88.37% accuracy.

- Monitoring rice pests with hybrid CNN-attention models, achieving 91% accuracy.

AI-powered multispectral imaging is advancing precision agriculture by enabling smarter pest management and reducing chemical use.

How AI-Powered Multispectral Imaging Detects Crop Pests: Process and Performance

How Multispectral Imaging Detects Pests in Crops

Detection Beyond Visible Light

Multispectral imaging works by capturing data across the visible and near-infrared range (VNIR, 400–1,000 nm) and the short-wave infrared range (SWIR, 1,000–2,500 nm) [4]. This broad range is essential because pests leave behind unique "spectral signatures", many of which appear only at wavelengths beyond normal human vision. These signatures come from factors like leaf damage, changes within the plant, and pest byproducts such as aphid honeydew or thrips feces [4].

"HSI [Hyperspectral Imaging] can be used to automate the detection of pest damage on plants and to assess damage unobservable via manual methods, such as systemic changes in plants."

– Springer Nature, Plant Methods [4]

A great example of this technology in action comes from the Julius Kühn Institute (JKI) PhenoScan Platform study conducted between 2021 and 2022. Using a dual-camera system (HySpex VNIR-1800 and SWIR-384me), researchers monitored bell pepper plants (Bedingo F1) and achieved over 80% precision in differentiating between Myzus persicae (aphids) and Frankliniella occidentalis (thrips) on single leaves. The study revealed that aphids typically covered 90.62% of the leaf area with honeydew, while thrips caused damage to 9.56% of the leaf area. Specific wavelengths - particularly 1,340 nm and 1,518 nm - proved critical in identifying pest feeding patterns [4].

Additionally, the Red Edge region (around 720 nm) is highly effective for detecting early biotic stress, such as changes in chlorophyll levels, long before physical symptoms like wilting or discoloration appear. Meanwhile, SWIR wavelengths such as 1,340 nm and 1,518 nm excel at identifying chemical byproducts that are nearly impossible to spot through manual inspection [4]. This capability not only allows for early detection of pest activity but also supports the development of AI tools that distinguish pest-related stress from other types of plant stress.
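To make the Red Edge idea concrete, here is a minimal sketch of the Normalized Difference Red Edge index (NDRE), a widely used vegetation index that compares near-infrared and red-edge reflectance. The plot names, reflectance values, and the 0.30 threshold are illustrative assumptions, not figures from the cited study.

```python
def ndre(nir, red_edge):
    """Normalized Difference Red Edge index from two reflectance values."""
    denom = nir + red_edge
    if denom == 0:
        return 0.0  # guard against division by zero on dark pixels
    return (nir - red_edge) / denom


# Hypothetical mean reflectance per plot: (near-infrared, red edge near 720 nm).
plots = {
    "plot_a": (0.52, 0.18),
    "plot_b": (0.40, 0.27),
    "plot_c": (0.50, 0.20),
}

STRESS_THRESHOLD = 0.30  # assumed cut-off; calibrate per crop and sensor

scores = {name: ndre(nir, edge) for name, (nir, edge) in plots.items()}
flagged = sorted(name for name, score in scores.items() if score < STRESS_THRESHOLD)
print(flagged)
```

A low NDRE only indicates that a plot deserves a closer look. It does not say whether the cause is a pest, a nutrient problem, or something else.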

Separating Pests from Other Crop Stressors

Multispectral imaging doesn't just detect pests - it also distinguishes their damage from other stressors, like nutrient deficiencies or environmental factors. Each type of stress creates a unique spectral pattern. For example, thrips feeding produces distinct signals at 720 and 955 nm, while nitrogen deficiency results in a completely different spectral response tied to declining chlorophyll levels [4].

Advanced hybrid models, such as CNN-attention systems that combine multispectral and thermal data, have pushed detection accuracy even further. One model achieved 91.0% accuracy in detecting infections in rice during its vegetative stage and 88.2% accuracy in identifying pest infestations across multiple crops, including maize, rice, wheat, tomato, and cassava [3]. When combining data sources, early detection sensitivity reached 88.1% for rice and 87.3% for maize [3].

A key factor in this precision is accurate leaf segmentation, which removes background noise such as soil or greenhouse structures [4]. This refined analysis enables AI models to provide highly specific alerts, allowing farmers to shift from broad pesticide applications to targeted treatments. This targeted approach not only reduces chemical usage but also helps prevent significant crop losses by catching infestations early.
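One common way to separate leaves from background is to threshold a vegetation index. The sketch below uses NDVI with an assumed threshold of 0.4 on a tiny hypothetical image. It is a stand-in technique for illustration and is not necessarily the segmentation method used in the cited study.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index for a single pixel."""
    denom = nir + red
    return (nir - red) / denom if denom else 0.0


def leaf_mask(nir_band, red_band, threshold=0.4):
    """Return a grid of booleans: True where the pixel looks like vegetation."""
    return [
        [ndvi(n, r) > threshold for n, r in zip(nir_row, red_row)]
        for nir_row, red_row in zip(nir_band, red_band)
    ]


# Hypothetical 2x3 reflectance grids; the right-hand pixels mimic bare soil.
nir = [[0.50, 0.48, 0.22],
       [0.51, 0.25, 0.20]]
red = [[0.08, 0.10, 0.18],
       [0.07, 0.20, 0.17]]

mask = leaf_mask(nir, red)
for row in mask:
    print(row)
```

Only pixels marked True would be passed to the pest classifier, so soil and structures do not dilute the spectral signal.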

AI Models for Pest Detection

Machine Learning and Deep Learning Applications

Building on the potential of AI-enhanced multispectral imaging, advanced deep learning models are now pushing detection capabilities to new heights. These models, particularly Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), have outpaced traditional pest detection methods. Their strength lies in their ability to automatically learn intricate features from complex datasets.

"Deep learning approaches, notably Convolutional Neural Networks (CNNs) and Vision Transformers (ViTs), offer significant improvements over traditional approaches by autonomously learning hierarchical feature representations from large-scale image data."

– Plant Methods Journal [6]

Among these, YOLO (You Only Look Once) models - such as YOLOv5, YOLOv8, YOLOv11, and YOLOv12 - are particularly favored for agriculture. These one-stage detectors strike a balance between speed and accuracy, making them ideal for real-time applications like drone-based monitoring. On the other hand, two-stage models like Faster R-CNN deliver higher precision but demand more computational power, which can limit their practicality in field settings [6].

For early-stage detection, Long Short-Term Memory (LSTM) networks analyze multispectral data over time, tracking changes in crop canopy reflectance. This allows them to identify underground pests and parasites before visible symptoms emerge [5].
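For readers curious about what an LSTM does with a time series, the sketch below runs one single-unit LSTM cell over a short reflectance sequence. The weights are untrained, made-up numbers and the series is hypothetical. A real model would learn its weights from labeled data and use many units, typically through a deep learning library.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def lstm_step(x, h_prev, c_prev, w):
    """One step of a single-unit LSTM cell; w maps gate name -> (w_x, w_h, bias)."""
    def pre(gate):
        w_x, w_h, bias = w[gate]
        return w_x * x + w_h * h_prev + bias

    i = sigmoid(pre("input"))         # how much new information to store
    f = sigmoid(pre("forget"))        # how much old memory to keep
    o = sigmoid(pre("output"))        # how much memory to expose
    g = math.tanh(pre("candidate"))   # candidate memory content
    c = f * c_prev + i * g            # updated cell state
    h = o * math.tanh(c)              # updated hidden state
    return h, c


def run_sequence(series, w):
    """Feed a series through the cell and return the final hidden state."""
    h, c = 0.0, 0.0
    for x in series:
        h, c = lstm_step(x, h, c, w)
    return h


# Hypothetical, untrained weights chosen only to make the example run.
weights = {
    "input": (0.5, 0.1, 0.0),
    "forget": (0.3, 0.2, 1.0),
    "output": (0.4, 0.1, 0.0),
    "candidate": (-1.2, 0.3, 0.5),
}

# Hypothetical canopy index for one plot across five growth stages.
series = [0.82, 0.80, 0.74, 0.65, 0.55]
state = run_sequence(series, weights)
print(round(state, 4))
```

The cell state carries information from earlier growth stages forward, which is what lets a trained network react to a gradual decline rather than to a single reading.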

Moreover, advanced frameworks now incorporate attention mechanisms - such as Multi-Scale Spatial Attention (MSPA) and Hierarchical Scaled Dot-Product Attention (HSDPA). These mechanisms help AI models focus on critical features, effectively distinguishing pests from background elements like soil or plant debris, even when pests closely resemble one another. This evolution in model architecture has led to impressive accuracy levels in recent studies [6].
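The building block behind most attention mechanisms is scaled dot-product attention. The sketch below implements only that basic operation on tiny hypothetical vectors. It is not an implementation of MSPA or HSDPA, whose details are not given here.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    peak = max(xs)
    exps = [math.exp(x - peak) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention; returns (outputs, attention weights)."""
    dim = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in keys
        ]
        w = softmax(scores)
        all_weights.append(w)
        outputs.append([
            sum(wi * v[j] for wi, v in zip(w, values))
            for j in range(len(values[0]))
        ])
    return outputs, all_weights


# Hypothetical 2-D features for three image patches: pest, soil, debris.
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[1.0], [2.0], [3.0]]
queries = [[1.0, 0.0]]  # a query that resembles the first patch

outputs, weights = attention(queries, keys, values)
print([round(w, 3) for w in weights[0]])
```

The patch most similar to the query receives the largest weight, which is the sense in which attention lets a model "focus" on pest-like regions.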

Accuracy Metrics and Research Results

The capabilities of these advanced models are reflected in their performance metrics. For instance, the AEC-YOLOv8n model achieved a 67.1% mean Average Precision (mAP@0.5) on the IP102 benchmark dataset, which includes 82 pest categories [6]. Similarly, improved YOLOv8 models designed for rice pest detection reached 70.2% mAP@0.5 on the R2000 dataset [6].
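The "@0.5" in mAP@0.5 refers to an Intersection over Union (IoU) threshold: a predicted box counts as correct only if it overlaps a ground-truth box with IoU of at least 0.5. A minimal sketch of that check, using hypothetical box coordinates:

```python
def iou(box_a, box_b):
    """Intersection over Union for boxes given as (x1, y1, x2, y2)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def is_true_positive(pred_box, truth_box, threshold=0.5):
    """A detection matches the ground truth when IoU reaches the threshold."""
    return iou(pred_box, truth_box) >= threshold


truth = (20, 20, 60, 60)   # hypothetical labeled pest
loose = (10, 10, 50, 50)   # prediction shifted away from the label
tight = (22, 18, 58, 62)   # prediction closely covering the label

print(is_true_positive(loose, truth), is_true_positive(tight, truth))
```

Average precision is then computed per class from these matches, and mAP is the mean across classes, which is why benchmarks with many visually similar pests tend to score lower than single-pest accuracy figures.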

Multimodal approaches, which combine multispectral imaging with environmental sensor data, have taken accuracy even further. In a study reported in December 2025, researchers Chuhuang Zhou and Min Dong described a field experiment in the Hetao Irrigation District, Inner Mongolia. Their system monitored grape, tomato, and sweet pepper crops, integrating visual data with sensors such as MG-811 gas sensors (for CO2 and volatile organic compounds) and DHT22 humidity sensors. Using a convolution–Transformer hybrid encoder, their framework achieved 92.1% accuracy, 93.5% precision, and an F1-score of 0.923 [7].
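Accuracy, precision, recall, and F1 measure different things, so it helps to see how they relate. The sketch below computes them from confusion-matrix counts (the counts are hypothetical) and also solves the F1 formula for recall. It assumes F1 is the harmonic mean of precision and recall computed with the same averaging, which the summary above does not confirm.

```python
def precision(tp, fp):
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp, fn):
    return tp / (tp + fn) if (tp + fn) else 0.0


def f1_score(p, r):
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r) if (p + r) else 0.0


def recall_from_precision_f1(p, f1):
    """Solve F1 = 2PR / (P + R) for R."""
    return f1 * p / (2 * p - f1)


# Hypothetical counts from a field trial: infested plots are the positive class.
tp, fp, fn, tn = 90, 10, 15, 885

p = precision(tp, fp)
r = recall(tp, fn)
accuracy = (tp + tn) / (tp + fp + fn + tn)
print(round(p, 3), round(r, 3), round(f1_score(p, r), 3), round(accuracy, 3))

# Recall implied by a precision of 0.935 and an F1-score of 0.923.
implied = recall_from_precision_f1(0.935, 0.923)
print(round(implied, 3))
```

Under that assumption, the reported precision and F1-score imply a recall of roughly 0.91. The hypothetical counts also show why accuracy alone can look high when healthy plots dominate the data.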

| Model / Framework | Target Crop/Pest | Key Metric | Result |
|---|---|---|---|
| Multispectral + LSTM | Branched Broomrape (Tomato) | Overall Accuracy | 88.37% [5] |
| Multimodal (Visual + Sensor) | Horticultural Pests | Accuracy | 92.1% [7] |
| AEC-YOLOv8n | IP102 Benchmark (82 classes) | mAP@0.5 | 67.1% [6] |
| Improved YOLOv8 | Rice Pests (R2000) | mAP@0.5 | 70.2% [6] |

These results show strong detection performance: the classification studies above report accuracy of roughly 88% to 92%, while mAP@0.5 on multi-class detection benchmarks such as IP102 and R2000 is closer to 67% to 70%. Beyond just identifying pests, these models can detect infestations early - often before human scouts notice any visible signs. This is especially critical given that pests and plant diseases are responsible for up to 40% of global crop losses each year, resulting in economic damages exceeding $220 billion [6].

Challenges and Solutions for Adoption

Technical and Computational Barriers

Farmers face significant challenges when it comes to using AI-powered multispectral imaging. A key issue is the sheer complexity of multispectral data combined with the limited availability of labeled datasets. These sensors produce massive amounts of data - just one image can be as large as 3.5 GB - but farms often lack enough annotated examples of pest infestations to train AI models effectively [4][5].

Another challenge lies in the imbalance of agricultural datasets. Healthy plants greatly outnumber infested ones, which can cause AI systems to miss rare but critical pest outbreaks. Mohammadreza Narimani and his team addressed this in work reported in September 2025, using data from a tomato farm in Woodland, Yolo County, CA. They combined the Synthetic Minority Over-sampling Technique (SMOTE), which balances the classes by generating synthetic examples of the rare class, with Long Short-Term Memory (LSTM) networks that track changes across five plant growth stages. This approach achieved an overall accuracy of 88.37% and a recall of 95.37% [5].
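The core of SMOTE is simple: pick a minority-class sample, pick one of its nearest minority neighbors, and create a new point somewhere on the line between them. Below is a minimal sketch of that idea with hypothetical two-feature samples. Production work would more likely rely on an established library implementation, and this sketch is not the authors' code.

```python
import random


def smote(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating between minority samples.

    Requires at least two minority samples.
    """
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # Rank the other minority samples by squared distance to the base point.
        neighbors = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
        )[:k]
        neighbor = rng.choice(neighbors)
        gap = rng.random()  # position along the segment, in [0, 1)
        synthetic.append([a + gap * (b - a) for a, b in zip(base, neighbor)])
    return synthetic


# Hypothetical (red edge, NIR) reflectance for the few infested plants on record.
infested = [[0.42, 0.31], [0.45, 0.29], [0.40, 0.35]]

new_points = smote(infested, n_new=4)
for point in new_points:
    print([round(v, 3) for v in point])
```

Because every synthetic point lies between two real infested samples, the classifier sees more examples of the rare class without simply duplicating existing rows.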

Environmental variability adds another layer of difficulty. AI algorithms that perform well in controlled lab settings often struggle in real-world greenhouse environments. For example, a September 2022 study published in Plant Methods found that an XGBoost-based system could accurately detect aphids and thrips on individual leaves in the lab, but its performance dropped significantly in greenhouse conditions. To address this, researchers are now focusing on reducing the number of wavelengths analyzed to just 10–15 essential bands (e.g., 455, 720, 955 nm). This not only reduces the data volume but also makes sensor technology more practical for field use [4].
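Band reduction can be pictured as picking, from everything a sensor records, only the channels closest to the wavelengths that matter. The sketch below assumes a hypothetical sensor with one band every 5 nm between 400 and 1,000 nm. The target wavelengths come from the text above, but the sensor layout is invented for illustration.

```python
def nearest_band_indices(wavelengths, targets):
    """For each target wavelength, return the index of the closest sensor band."""
    return [
        min(range(len(wavelengths)), key=lambda i: abs(wavelengths[i] - t))
        for t in targets
    ]


# Hypothetical sensor layout: 121 bands, one every 5 nm from 400 to 1,000 nm.
wavelengths = [400 + 5 * i for i in range(121)]
targets = [455, 720, 955]  # example wavelengths named in the text

indices = nearest_band_indices(wavelengths, targets)
selected = [wavelengths[i] for i in indices]
reduction = 1 - len(indices) / len(wavelengths)

print(selected, f"{reduction:.1%} fewer bands")
```

Keeping only a handful of bands shrinks storage and processing in proportion, which is what makes simpler, cheaper field sensors plausible.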

Despite these hurdles, drones are emerging as a promising tool to address many of these computational and environmental challenges.

Integration with Drone Platforms

Drones are proving to be a game-changer, offering practical solutions to many of the barriers associated with multispectral imaging. As highlighted in Scientific Reports, "Drones have emerged as a disruptive technology in this agroengineering space by enabling high-resolution surveying of vast farmland areas with minimal workforce" [8]. Modern drones equipped with multispectral sensors and edge computing devices, like the NVIDIA Jetson Nano, can process data on-site, eliminating the need for costly cloud-based infrastructure [8].

One innovative example is AgroVisionNet, which combines high-resolution drone imagery with IoT sensor data - such as temperature, humidity, and soil moisture - using TensorFlow Lite quantization. This integration produces visual heatmaps that clearly identify areas of pest impact [8].
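Quantization shrinks a model by storing weights as 8-bit integers instead of 32-bit floats. The sketch below shows the affine mapping described in TensorFlow Lite's 8-bit quantization specification, where a real value is approximated as scale × (integer − zero point). It is plain Python for illustration, not the TensorFlow Lite API and not the AgroVisionNet pipeline, and the weights are made up.

```python
def quant_params(x_min, x_max, q_min=-128, q_max=127):
    """Derive scale and zero point so the float range maps onto int8."""
    x_min, x_max = min(x_min, 0.0), max(x_max, 0.0)  # range must contain 0
    scale = (x_max - x_min) / (q_max - q_min)
    if scale == 0:
        scale = 1.0  # all-zero tensor: any scale works
    zero_point = round(q_min - x_min / scale)
    return scale, zero_point


def quantize(x, scale, zero_point, q_min=-128, q_max=127):
    q = round(x / scale) + zero_point
    return max(q_min, min(q_max, q))  # clamp to the int8 range


def dequantize(q, scale, zero_point):
    return scale * (q - zero_point)


weights = [-0.5, 0.0, 0.37, 1.0]  # hypothetical float weights
scale, zero_point = quant_params(min(weights), max(weights))

for w in weights:
    q = quantize(w, scale, zero_point)
    print(w, q, round(dequantize(q, scale, zero_point), 4))
```

Each stored value takes one byte instead of four, at the cost of a rounding error of at most half the scale per weight, which is the trade that lets models fit on small edge devices.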

For farmers across the U.S., including those in Idaho, companies like Drone Spray Pro are making this technology more accessible. Products like the DJI Agras series come equipped with multispectral sensors and edge computing capabilities, allowing farmers to detect pests in real time during routine field surveys. Additionally, the use of transfer learning on standardized drone platforms enables farmers to implement proven AI technologies without the need for custom-built systems [10]. These advancements make it easier for farmers to integrate AI and multispectral imaging into their daily operations, even in challenging field conditions.

Other Applications of AI in Crop Management

Targeted Pesticide Application

AI-powered multispectral imaging is transforming pest management by shifting the focus from reacting to infestations to preventing them. These systems detect and predict pest outbreaks early, allowing pesticides to be applied only to the affected areas instead of covering entire fields. This approach minimizes chemical use, reduces harm to beneficial insects, and helps protect the environment [9].
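As a rough illustration of spot treatment, the sketch below turns a grid of per-cell pest probabilities into a spray map and reports how much of the field would be treated. The grid values and the 0.6 threshold are hypothetical, and real prescription maps would also account for buffer zones and sprayer swath width.

```python
def prescription(prob_grid, threshold=0.6):
    """Mark a cell for treatment when its pest probability reaches the threshold."""
    return [[p >= threshold for p in row] for row in prob_grid]


def treated_fraction(mask):
    """Share of field cells that would be sprayed."""
    cells = [cell for row in mask for cell in row]
    return sum(cells) / len(cells)


# Hypothetical model output: pest probability for each cell of a 3x4 field grid.
pest_probability = [
    [0.05, 0.10, 0.72, 0.81],
    [0.02, 0.08, 0.65, 0.40],
    [0.01, 0.03, 0.12, 0.09],
]

spray_map = prescription(pest_probability)
fraction = treated_fraction(spray_map)
print(f"Spray {fraction:.0%} of the field instead of 100%")
```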

"With AI‐provided precision, pesticides could be applied only when and where needed... As a result, costs are lowered, pollution is limited, and the impact on global diversity is reduced"

– PLOS Sustainability and Transformation [9]

A great example of this is the Plantix app, which showcased this targeted method in India in January 2026. Farmers used the app to upload images for AI-based diagnoses of over 400 diseases across 40 major crops, achieving accuracy rates above 90% [9]. In the U.S., particularly in Idaho, drone platforms like those offered by Drone Spray Pro combine multispectral sensors with precision spraying systems. These drones can apply pesticides with incredible accuracy during routine field surveys.

AI doesn’t stop at pest control - it also helps detect other stress factors that can affect crop health.

Monitoring Abiotic Stressors

In addition to pests, AI-enhanced multispectral imaging is being used to identify abiotic stressors like drought, nutrient deficiencies, and soil imbalances. By analyzing spectral signatures, these systems can detect problems early, giving farmers the chance to address issues before they escalate [2]. Advanced tools like the MSX and MS5c integrate imaging data with environmental sensors to create detailed maps of crop health and resource needs.

From May 2023 to October 2024, researchers led by Chuhuang Zhou at China Agricultural University carried out a long-term study in Inner Mongolia’s Hetao Irrigation District. They used a multimodal deep learning framework on crops like grapes, tomatoes, and sweet peppers. By combining high-resolution imagery with data from 2.6 million samples of temperature, humidity, and gas concentrations, the system achieved an impressive 93.5% precision and an AUC of 95.7% for early pest and disease warnings [7].

"The application of multimodal monitoring networks in early pest and disease warning not only reduces chemical control input and ecological costs but also enhances market returns by ensuring yield stability"

– Chuhuang Zhou [7]
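At its simplest, multimodal fusion means putting image-derived features and sensor readings into one input vector on a comparable scale. The sketch below shows that input-level step only. The sensor ranges, feature values, and field names are assumptions, and the cited framework uses a far more elaborate learned encoder.

```python
def min_max_scale(value, low, high):
    """Scale a reading into [0, 1], clipping values outside the expected range."""
    if high == low:
        return 0.0
    return min(1.0, max(0.0, (value - low) / (high - low)))


# Assumed operating ranges for each sensor channel (hypothetical).
SENSOR_RANGES = {
    "temperature_c": (0.0, 50.0),
    "humidity_pct": (0.0, 100.0),
    "co2_ppm": (300.0, 2000.0),
}


def fuse(image_features, sensor_reading):
    """Append scaled sensor channels, in alphabetical order, to image features."""
    scaled = [
        min_max_scale(sensor_reading[name], *SENSOR_RANGES[name])
        for name in sorted(SENSOR_RANGES)
    ]
    return list(image_features) + scaled


image_features = [0.12, 0.80, 0.33]  # hypothetical embedding from an image model
reading = {"temperature_c": 25.0, "humidity_pct": 60.0, "co2_ppm": 1150.0}

fused = fuse(image_features, reading)
print(fused)
```

Scaling matters because raw CO2 readings in the hundreds would otherwise swamp image features that sit between 0 and 1.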

This technology also helps farmers fine-tune irrigation and fertilization. By pinpointing areas where stress indicators appear, farmers can apply water and nutrients more efficiently, cutting down on waste while improving crop yields. These advancements underscore the growing role of AI in modern agriculture, paving the way for smarter, more sustainable farming practices [1].

Conclusion

Key Takeaways from Recent Studies

AI-powered multispectral imaging has made noticeable strides in pest detection, boosting both accuracy and efficiency. For example, a study led by Mohammadreza Narimani and reported in June 2024 used drone-based multispectral imaging combined with LSTM networks and SMOTE. This approach achieved 88.37% accuracy and 95.37% recall in identifying branched broomrape on a tomato farm in Woodland, Yolo County, California [5].

"UAV-based multispectral sensing coupled with deep learning could provide a powerful precision agriculture tool to reduce losses and improve sustainability in tomato production."

– Mohammadreza Narimani, Researcher [5]

In another study from April 2026, a hybrid CNN-attention model analyzed 1,760 samples across six crops. It achieved 91.0% accuracy for rice during its vegetative stage and 88.2% accuracy for detecting pest infestations [3]. These studies highlight how combining AI with multispectral imaging not only sharpens accuracy but also speeds up early pest detection, paving the way for further advancements.

Future Trends in Pest Detection

The future of pest detection is heading toward real-time, scalable systems powered by AI on edge devices. Platforms like Drone Spray Pro are already integrating advanced AI with drones to enhance field monitoring and enable precise treatments, showcasing the potential of these technologies.

Another promising direction is multimodal data fusion, which will combine multispectral imagery with inputs from thermal sensors and environmental data such as temperature and humidity. This layered approach will provide a deeper understanding of crop health and improve detection accuracy. As datasets grow to encompass the estimated 5,000 global pest species, AI models will likely become more versatile and applicable across a wider range of crops and regions [9].

FAQs

What’s the difference between multispectral and hyperspectral imaging for pest detection?

Multispectral imaging gathers data from a few selected spectral bands, often tailored to highlight specific characteristics such as plant health or the presence of pests. In contrast, hyperspectral imaging captures information across hundreds of narrow spectral bands, delivering highly detailed spectral signatures that allow for more precise pest identification. However, while hyperspectral systems offer greater accuracy, they tend to be more complex and costly, demanding advanced processing techniques and analysis.

How can AI tell pest damage apart from nutrient deficiency or drought stress?

AI leverages multispectral and thermal data to detect specific spectral and morphological patterns associated with pests. By employing advanced models, such as hybrid CNN-attention systems, it can analyze these patterns with precision. This enables the technology to differentiate pest damage from other problems, like nutrient deficiencies or drought stress, with impressive accuracy.

What equipment do I need to use AI multispectral pest detection on my farm?

To implement AI multispectral pest detection, you'll need a drone outfitted with multispectral sensors. These sensors typically cover visible and near-infrared bands and are sometimes paired with a thermal camera to gather more detailed information about your crops. Alongside the drone, you'll also need AI processing tools - either hardware or software - to analyze the collected data. This analysis can happen directly on the drone or through a connected ground station.

For the most accurate results, make sure the AI software is customized to your specific crops and pests, using training data that reflects your unique agricultural conditions.